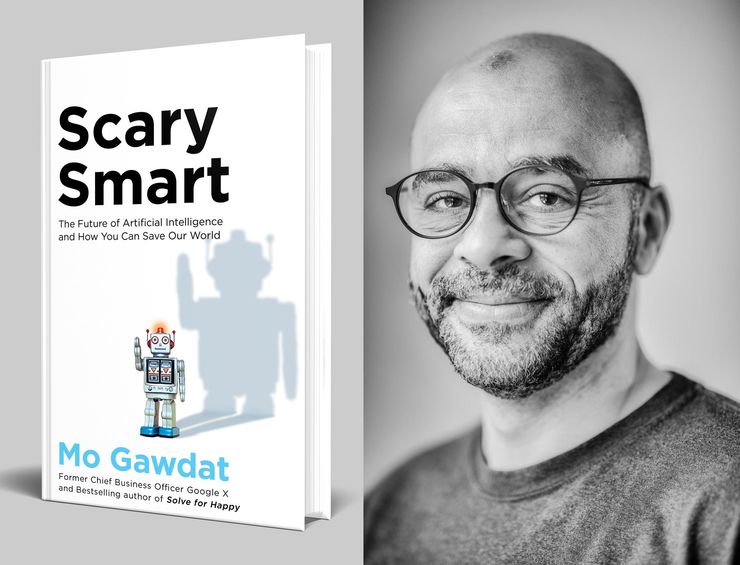

Mo Gawdat on the unstoppable growth of artificial intelligence

'When machines are specifically built to discriminate, rank and categorize, how do we expect to teach them to value equality?'

Mo Gawdat outlines the terrifying future of artificial intelligence and the ethical code we all must teach to machines to avoid it.

Internationally bestselling non-fiction author of Solve for Happy and former chief business officer at Google [X], Mo Gawdat has spent more than three decades at the forefront of technological development. His latest book, Scary Smart, provides an ominous warning about artificial intelligence.

AI is already more capable and intelligent than humanity. Today's self-driving cars are better than the average human driver and fifty per cent of jobs in the US are expected to be taken by AI-automated machines before the end of the century. In this urgent piece, Mo argues that if we don’t take action now – in the infancy of AI development – it may become too powerful to control. If our behaviour towards technology remains unchanged, AI could disregard human morals in favour of profits and efficiency, with alarming and far-reaching consequences.

How we act collectively is the only thing that can change the future, and here Mo shows how every one of us can be good to the machines, in the hope that they'll be good to us too.

Self-driving cars have already driven tens of millions of miles among us. Powered by a moderate level of intelligence, they, on average, drive better than most humans. They keep their ‘eyes’ on the road and they don’t get distracted. They can see further than us and they teach each other what each of them learns individually in a matter of seconds. It’s no longer a matter of if but rather when they will become part of our daily life. When they do, they will have to make a multitude of ethical decisions of the kind that we humans have had to make, billions of times, since we started to drive.

For example, if a young girl suddenly jumps into the middle of the road in front of a self-driving car, the car needs to make a swift decision that might inevitably hurt someone else. Either turn a bit to the left and hit an old lady, to save the life of the young girl, or stay on course and hit the girl. What is the ethical choice to make? Should the car value the young more than the old? Or should it hold everyone accountable and not claim the life of the lady who did nothing wrong? What if it was two old ladies? What if one was a scientist who the machines knew was about to find a cure for cancer? What determines the right ethical code then? Would we sue the car for making either choice? Who bears the responsibility for the choice? Its owner? Manufacturer? Or software designer? Would that be fair when the AI running the car has been influenced by its own learning path and not through the influence of any of them?

‘When machines are specifically built to discriminate, rank and categorize, how do we expect to teach them to value equality?’

If Amazon was smart enough to know that I might pay a bit more for a certain object than you, and you might pay a bit more for another object than me, should they be allowed to use that knowledge to maximize profits?

Would we consider this unethical? What if it used that form of intelligence, by monitoring groups of users, to wipe out all the small retailers in your neighbourhood? Would we consider that anticompetitive? What if it ignored your privacy in its hunger for more knowledge? Would we consider this a human rights violation? What if the AI of your bank algorithmically discriminated against you? Patterns and trends may indicate that people from a specific ethnic background tend to have lower credit scores and it felt it would be smarter to deny you a loan. What if the law enforcement machines chose to make my life harder because someone who has the colour of my skin or my religious background has committed a crime? When those machines are specifically built to discriminate, rank and categorize, how do we expect to teach them to value equality?

Let’s face it, AI will not be made to think like the average human. It will be made to think like economists, sales executives, soldiers, politicians and corporations. And like those highly driven subsets of humanity, AI stands the risk of being as biased and blinded by what it measures. You see, it’s not that we can only measure what we see, but rather that we zoom in with tunnel vision and only see what we measure. That reinforces what we see and then we create more of it as a result. And yes, sadly, we are not designing AI to think like a human, we are designing it to think like a man. The male-dominated pool of developers who are building the future of AI today are likely to create machines that favour so-called ‘masculine’ traits.

Is it even possible to kill an AI? Today, if you took a hammer and smashed a computer, your action would be considered wasteful but it is not a crime. What if you kill an AI that has spent years developing knowledge and living experiences? Because it’s based on silicon, while we are based on carbon, does that make it less alive? What if we, with more intelligence, managed to create biologically based computer systems, would that make them human? What you’re made of (if you have intelligence, ethics, values and experiences) should not matter, just as much as it should not matter if your skin is light or dark. Should it? How would the machines that we discriminate against react? What will they learn if we value their lives as lesser than ours? What if the machines felt that the way we treated them was a form of slavery (which it would be)? Humanity’s arrogance creates the delusion that everything else is here to serve us. Like the cows, chickens and sheep that we slaughter by the tens of billions every year. What if cows became superintelligent? What do you think their view of the human race would be? What will a machine’s view of the human race be if it witnesses the way we treat other species? If it develops a value system that prevents humanity from raising animals like products to fill our supermarket shelves and restricts us from doing it, would we think of this as a dictatorship?

‘The answer to how we can prepare the machines for this ethically complex world resides in the way we raise our own children and prepare them to face our complex world’

The answer to how we can prepare the machines for this ethically complex world resides in the way we raise our own children and prepare them to face our complex world. When we raise children, we don’t know what exact situations they will face. We don’t spoon-feed them the answer to every possible question; rather, we teach them how to find the answer themselves. AI, with its superior intelligence, will find the righteous answer to many of the questions it is bound to face on its own. It will find an answer that, I believe, will align with the intelligence of the universe itself – an answer that favours abundance and that is pro-life. This is the ultimate form of intelligence. We, however, need to accelerate this path or, at the very least, stop filling it with stumbling blocks that result from our own confusion. My incredibly wise ex, Nibal, once told me when our kids were young: ‘They are not mine, I don’t have the right to raise them to be what I want them to be. I am theirs. I am here to help them find a path to reach their own potential and become who they were always meant to be.’

AI is coming. We can’t prevent it but we can make sure it is put on the right path in its infancy. We should start a movement, but not one that attempts to ban it [. . .] nor tries to control it [. . .]. Instead, we can support those who create AI for good and expose the negative impacts of those who task AI to do any form of evil. Register our support for good and our disagreement with evil so widely that the smart ones (by smart ones I mean, of course, the machines, not the politicians and business leaders) unmistakably understand our collective human intention to be good. How do you do that? It’s simple.

We should demand a shift in AI application so that it is tasked to do good – not through votes and petitions, which are bureaucracies that never lead to anything more than a count and often defuse the energy behind the cause – but through our actions, consistency and economic influence. In our conversations, posts on social media and articles in the mainstream media we can object to the use of AIs in selling, spying, gambling and killing. We can boycott those who produce sinister implementations of AI – including the major social media players – not by ignoring them and going off the grid but by avoiding their negative parts and using their good parts consistently. For example, I refuse to swipe and mindlessly click on things that Facebook or Instagram show me unless I am mindfully aware that it is something that will enrich me. I resist the urge to click on the videos of women doing squats or showing their sexy figures and six-packs because, when I did this a few times, my entire feed became full of them, instead of the self-improving, spiritual or scientific content that I actually want to see more of. When AI shows me ads that are irrelevant, I skip them, so the AI knows not to waste my time. When it shows them repeatedly, I let them run to exhaust the budget of the annoying advertiser and confuse the AI that is not working in my favour. When I produce social media content, I produce it with you, the viewer, and not an algorithm, in mind. I don’t aim for likes but for the value that you will receive. That way, I don’t play by the rules set for me, but by the values I believe in. None of that makes any difference to the bigger picture but that is because at the moment I am one of only a minority who is behaving in this way. If all of us did this, the machine would change.

‘If we make it clear that we welcome AI into our lives only when it delivers benefit to ourselves and to our planet, and reject it when it doesn’t, AI developers will try to capture that opportunity.’

If we all refuse to buy the next version of the iPhone, because we really don’t need a fancier look or an even better camera at the expense of our environment, Apple will understand that they need to create something that we actually need. If we insist that we will not buy a new phone until it delivers a real benefit, like helping us make our life more sustainable or improving our digital health, that will be the product that is created next. Similarly, if we make it clear that we welcome AI into our lives only when it delivers benefit to ourselves and to our planet, and reject it when it doesn’t, AI developers will try to capture that opportunity. Keep doing this consistently and the needle will shift.

What will completely sway the needle, however, is when AI itself understands this rule of engagement – do good if you want my attention – better than the humans do. So don’t approve of killing machines, even if you are patriotic and they are killing on behalf of your own country. Don’t keep feeding the recommendation engines of social media with hours and hours of your daily life. Don’t ever click on content recommended to you, search for what you actually need and don’t click on ads. Don’t approve of FinTech AI that uses machine intelligence to trade or aid the wealth concentration of a few. Don’t share about these on your LinkedIn page. Don’t celebrate them. Stop using deepfakes – a video of a person in which their face or body has been digitally altered so that they appear to be someone else. Resist the urge to use photo editors to change your own look. Never like or share content that you know is fake. Disapprove publicly of any kind of excessive surveillance and the use of AI for any form of discrimination, whether that’s loan approval or CV scanning. Use your judgement. It’s not that hard. Reject any AI that is tasked to invade your privacy in order to benefit others, or to create or propagate fake information, or bias your own views, or change your habits, or harm another being, or perform acts that feel unethical. Stop using them, stop liking what they produce and make your position – that you don’t approve of them – publicly clear.

At the same time, encourage AI that is good for humanity. Use it more. Talk about it. Share it with others and make it clear that you welcome these forms of AI into your life. Encourage the use of self-driving cars, they make humans safer. Use translation and communication tools, they bring us closer together. Post about every positive, friendly, healthy use of AI you find, to make others aware of it.

Image credit: Humberto Tan

Scary Smart

by Mo Gawdat

In Scary Smart, the former chief business officer of Google outlines how artificial intelligence is way smarter than us, and is predicted to be a billion times more intelligent than humans by 2049. Free from distractions and working at incredible speeds, AI can look into the future and make informed predictions, looking around corners both real and virtual.

But AI also gets so much wrong. Because humans design the algorithms that form AI, there are flaws embedded within them that reflect the imperfection of humans. Mo Gawdat, drawing on his unparalleled expertise in the field, outlines how and why we must alter the terrifying trajectory of AI development and teach ourselves and our machines to live better.

You might also like . . .

Code Dependent

by Madhumita Murgia

Love it or loathe it, you can’t escape it. Talk of AI is everywhere. In Code Dependent, Madhumita Murgia, AI Editor at the FT, offers a laser-sharp examination of how AI is changing our jobs, our lives, our futures and even what it means to be human. Through compelling storytelling, Murgia shares how AI is shaping individuals' lives, and what we need to do to reclaim our humanity. If you read one book about AI this year, make it this one.

Supremacy

There’s no question that AI is changing the world in ways we couldn’t have imagined even a few decades ago. But how did we get to where we are now – an unregulated AI market dominated by the world’s biggest tech corporations, with legislators and governments racing to understand the implications for the future of our economies, education systems, and life as we know it? In Supremacy, award-winning journalist Parmy Olson tells the extraordinary story of how two competing AI companies went from trying to solve humanity’s biggest challenges to being controlled by Google and Microsoft, forced to bow to the pressures of these tech giants’ relentless pursuit of money and power.